Yes, SQL Server 2022 is coming, and it will have many advanced features.

https://www.microsoft.com/en-us/sql-server/sql-server-2022

You can learn more about what's new in SQL Server 2022 here:

Bob Ward will be sharing more about SQL Server 2022 on Data Exposed.


Azure Arc is another great feature coming to Microsoft Azure. As we expected from Microsoft's Azure cloud, it has great DevOps options that work well for everyone.

Azure offers multiple subscription options: you can run servers (Windows/Linux) and software, including databases such as SQL Server. Azure provides different features and support options, for example subscriptions where Microsoft takes care of your system, monitoring, and support, so you only have to use your system/software and can focus on the application.

So far, every cloud provider supports the systems we deploy on its cloud, and each provider is responsible only for its own systems.

With Azure Arc, Microsoft has extended that support to hybrid systems: if you have physical or virtual systems in your own datacenter, you can subscribe them to Microsoft, which will take care of monitoring and other support according to your subscription. That way Microsoft can support your systems even when they are not on its cloud.

Azure Arc is planning to support SQL Server (currently in preview), which means more is coming where Azure takes care of the whole support system.

Azure Arc can also manage Kubernetes clusters.

Azure Arc is also planned to support systems on other clouds, if required (hybrid cloud).

This will add business for Azure, as Microsoft extends its support and technologies, such as AI/ML and other Azure features, to these systems.

This is great for Microsoft, but on the other hand I am a little worried: if system support is managed by Microsoft itself, what will happen to support teams?

Things are changing in the support world…

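4 Steps:

```python
from sklearn.linear_model import LogisticRegression  # 1. import the model class

logreg = LogisticRegression()  # 2. create the model object
logreg.fit(x, y)               # 3. train it on features x and labels y
logreg.predict(x)              # 4. predict labels for x
```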

In this series I will mostly talk about Python and R, and we will look at the different ML models supported in Python. Python is a great open-source language available from multiple providers, and SQL Server Machine Learning Services also provides it built in; I will not go into detail about Python itself in this series. Python has multiple libraries; for ML it has scikit-learn (sklearn).

Using Python we can implement ML for classification and regression models. The following are the algorithms Python supports for ML:

Classification:

Regression:

Classification:

Machine learning: a system learns the pattern in existing data, and based on that pattern we can predict future information.

There are two main types of machine learning problems:

Classification:

In this category the output is a class, such as true or false. For any condition, the system has to predict whether the output will be true or false. The standard example is predicting whether an email is SPAM or NOT SPAM; there are many similar examples.

ML has multiple algorithms for classification.
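To make the classification idea concrete, here is a minimal, dependency-free sketch of one such algorithm: logistic regression trained with plain batch gradient descent. It is not scikit-learn's implementation, and the tiny email dataset is hypothetical, made up only for illustration: each email is described by its number of links and how often the word "free" appears.

```python
import math


def sigmoid(z: float) -> float:
    """Map any real number to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(X, y, lr=0.1, epochs=2000):
    """Fit weights and a bias with batch gradient descent on the log loss."""
    n_features = len(X[0])
    w = [0.0] * n_features
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            p = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b)
            err = p - yi  # gradient of the log loss w.r.t. the linear score
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / len(X) for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b


def predict(w, b, x, threshold=0.5):
    """Return True (SPAM) when the predicted probability reaches the threshold."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b) >= threshold


# Hypothetical toy data: [number of links, count of the word "free"] per email
X = [[0, 0], [1, 0], [0, 1], [1, 1], [5, 3], [6, 4], [4, 5], [7, 2]]
y = [0, 0, 0, 0, 1, 1, 1, 1]  # 1 = SPAM, 0 = NOT SPAM

w, b = fit_logistic(X, y)
print(predict(w, b, [0, 0]))  # False: looks like a normal email
print(predict(w, b, [6, 5]))  # True: looks like SPAM
```

The output is a true/false decision, which is what makes this classification rather than regression.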

Regression:

Regression predicts an expected future value (a number rather than a true/false class) from the given data, based on the pattern learned from the input and sample data. There are different algorithms, depending on the pattern of the data and the combination of input values.
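The regression idea can be sketched with the simplest algorithm, ordinary least squares for a single input value. This is plain Python rather than scikit-learn, and the sample data (month number against database size in GB) is hypothetical, made up for illustration.

```python
def fit_linear(xs, ys):
    """Ordinary least squares for y = intercept + slope * x (one input feature)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return intercept, slope


def predict(intercept, slope, x):
    """Predict the value for a new input using the fitted line."""
    return intercept + slope * x


# Hypothetical sample data: month number -> database size in GB
months = [1, 2, 3, 4, 5]
size_gb = [10.1, 11.9, 14.2, 15.8, 18.0]

intercept, slope = fit_linear(months, size_gb)
print(round(slope, 2), round(intercept, 2))    # 1.97 8.09
print(round(predict(intercept, slope, 6), 2))  # 19.91 (expected size in month 6)
```

The model learns the pattern (about 1.97 GB of growth per month) from the sample data and uses it to predict a future value.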

Starting with PowerShell 4.0 (released with Windows Server 2012 R2), PowerShell introduced Desired State Configuration (DSC), which helps you build a standard configuration/process/template for your system's state, and then apply and maintain that state for compliance and standardization of your environment. It can be customized as well.

This is helpful for:

DSC has two processes:

There are two ways of processing DSC:

DSC requires DSC resources; the built-in ones, such as File, ship in the PSDesiredStateConfiguration module, which is generally included. The process flow is: write a DSC configuration, then compile it, which creates a MOF (Managed Object Format) file, the metadata/configuration file for the node. The MOF file is central to DSC processing: DSC applies the configuration it describes and ensures the node stays in the desired state defined by the MOF file. From PowerShell 5.x onwards, the MOF file is encrypted by default when it is applied to the node, which avoids the compliance risk of having sensitive data/passwords stored in plain text.

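Example: create a simple folder and file, and make sure they always exist, checking every 20 minutes (the check interval itself is a Local Configuration Manager setting, ConfigurationModeFrequencyMins, not part of this configuration).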

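```powershell
Configuration DirCheck {
    # Node name can be a remote host or localhost
    Node Node1 {
        # Resource type "File": make sure the folder exists
        File DirCheck {
            Type            = 'Directory'
            DestinationPath = 'C:\DoNotDelete'
            Ensure          = 'Present'
        }

        # Make sure the file exists with the expected contents, after the folder is in place
        File FileCheck {
            Type            = 'File'
            DestinationPath = 'C:\DoNotDelete\DND_File.txt'
            Contents        = 'Vin'
            Ensure          = 'Present'
            DependsOn       = '[File]DirCheck'
        }
    }
}

# Compile the configuration: this creates Node1.mof in the output folder
DirCheck -InstanceName localhost -OutputPath 'D:\dsc\DirCheck\'

# Push the MOF to Node1 and wait until it has been applied
Start-DscConfiguration -Wait -Verbose -Path 'D:\dsc\DirCheck\' -ComputerName Node1 -Force
```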

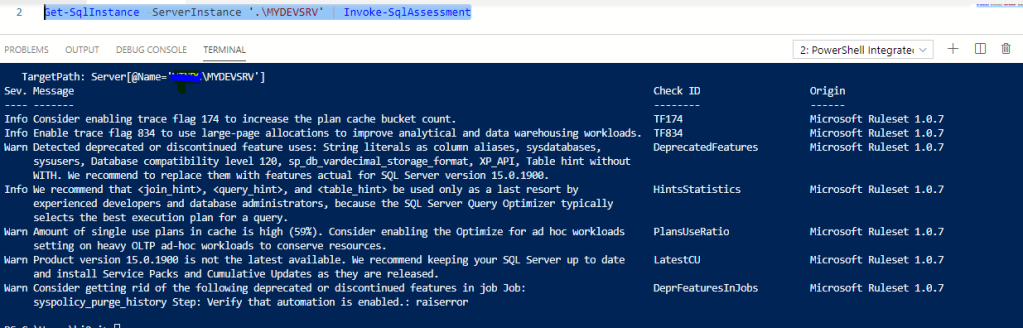

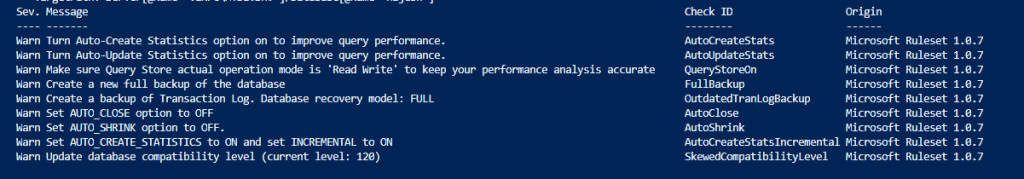

In June/July 2019, Microsoft PowerShell gained another great feature through SMO and the SqlServer module: SQL Assessment.

This is a great feature: Microsoft SQL Server provides an assessment of your SQL Server with general recommendations on its configuration. It really helps solve many of the problems DBAs face; otherwise a DBA has to write a lot of code for this difficult task, and it is one of the most important activities a DBA performs.

I am very glad to see it; it will take DBA support to the next level. It can be used with SQL Server 2012 onwards and works for SQL Server on both Windows and Linux.

For example:

To get the assessment for named instance '.\test1':

Database-level assessment for named instance '.\test1', database testdb:

This provides all of Microsoft's recommendations… cool.

We can also write the results into a table and work on them there:

```powershell
# Instance-level assessment; -Force lets Write-SqlTableData create the table if it does not exist
Get-SqlInstance -ServerInstance '.\inst1' | Invoke-SqlAssessment -FlattenOutput |
    Write-SqlTableData -ServerInstance '.\inst1' -DatabaseName 'db1' -SchemaName dbo -TableName table_SqlAssessment -Force

# Database-level assessment for db1, appended to the same table
Get-SqlDatabase -ServerInstance '.\inst1' -Database db1 | Invoke-SqlAssessment -FlattenOutput |
    Write-SqlTableData -ServerInstance '.\inst1' -DatabaseName 'db1' -SchemaName dbo -TableName table_SqlAssessment
```

After Windows PowerShell 5.x, PowerShell is no longer installed or delivered with the Windows bundle; the new PowerShell is independent of Windows and the operating system.

PowerShell is now a separate project and is available on GitHub.

Early this year we got PowerShell 6, and now a PowerShell 7 preview has been released.

There is a ton of great stuff associated with it.

You can install it from GitHub:

https://github.com/PowerShell/PowerShell/releases/tag/v7.0.0-rc.1

Also, just as Azure Data Studio is set to replace SSMS, PowerShell ISE will no longer receive enhancements. Instead, PowerShell Core 6, PowerShell 7, and later versions are being released, and they are platform independent… very interesting…

So for a PowerShell code editor (ISE), we will be using Visual Studio Code.

In Visual Studio Code, you have to install the PowerShell extension to get an ISE-like look and feel.

Good to have the next level of PowerShell…

Happy learning!!!

Today I was working on a SQL Server cursor.

A standard cursor definition looks like this (the sqlCursor snippet from Azure Data Studio):

The following is a working solution that loops over all databases, or over a single database when @db_nm is set. Hope this helps.

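```sql
-- Set @db_nm to a database name to loop over that database only; leave it NULL for all databases
DECLARE @db_nm sysname;
DECLARE @SQL nvarchar(1024);

IF (@db_nm IS NULL)
    SET @SQL = N'DECLARE allDB_cursor CURSOR GLOBAL FOR SELECT name FROM sys.databases';
ELSE
    -- QUOTENAME(..., '''') wraps the name in single quotes and escapes any embedded quote
    SET @SQL = N'DECLARE allDB_cursor CURSOR GLOBAL FOR SELECT name FROM sys.databases WHERE name = '
             + QUOTENAME(@db_nm, '''');

-- GLOBAL keeps the cursor visible after the dynamic batch run by EXEC finishes
EXEC (@SQL);

OPEN allDB_cursor;
FETCH NEXT FROM allDB_cursor INTO @db_nm;

WHILE @@FETCH_STATUS = 0
BEGIN
    PRINT @db_nm;
    FETCH NEXT FROM allDB_cursor INTO @db_nm;
END

CLOSE allDB_cursor;
DEALLOCATE allDB_cursor;
GO
```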

Here are the different ways to get transaction log (tlog) file information:

sys.dm_db_log_stats – provides summary information about the tlog:

SELECT * FROM sys.dm_db_log_stats(db_id())

sys.dm_db_log_info – DMF that returns VLF (virtual log file) information, one row per VLF; it replaces DBCC LOGINFO, which is undocumented by Microsoft:

SELECT * FROM sys.dm_db_log_info ( db_id())

sys.master_files – detailed information about all files (data/log) of every database; it replaces the old sysaltfiles:

select * from sys.master_files where database_id=db_id() and file_id=2

sys.sysfiles – one row for each file in the current database (an older compatibility view):

SELECT * FROM sys.sysfiles WHERE fileid=2

DBCC SQLPERF – an old but good DBCC command to see the log size and percentage of log space used for all databases. It can also be used to clear wait and latch statistics:

dbcc sqlperf(logspace)

sys.dm_db_log_space_usage – only size-related information about the tlog of the current database:

select * from sys.dm_db_log_space_usage

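sys.databases (log_reuse_wait_desc) – shows what is currently preventing log space from being reused:

select log_reuse_wait ,log_reuse_wait_desc from sys.databases where name =DB_NAME()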

This helps me a lot; hope it helps someone.